AI integration challenges are derailing enterprise investments across the UK financial sector at an alarming rate, and if you are reading this mid-crisis, you are not alone. As a recovery consultant who has conducted forensic post-mortems on distressed deployments across the City of London, I can confirm that the failure point is rarely the model itself. Working with a capable AI integration partner makes the difference between a commercially viable deployment and a compliance liability. Our 2025 Enterprise AI Distress Index, drawn from 214 UK financial firms, found that 73% of AI project stalls occur during legacy data harmonisation, not model training. The implications are severe, and the path to recovery is precise.

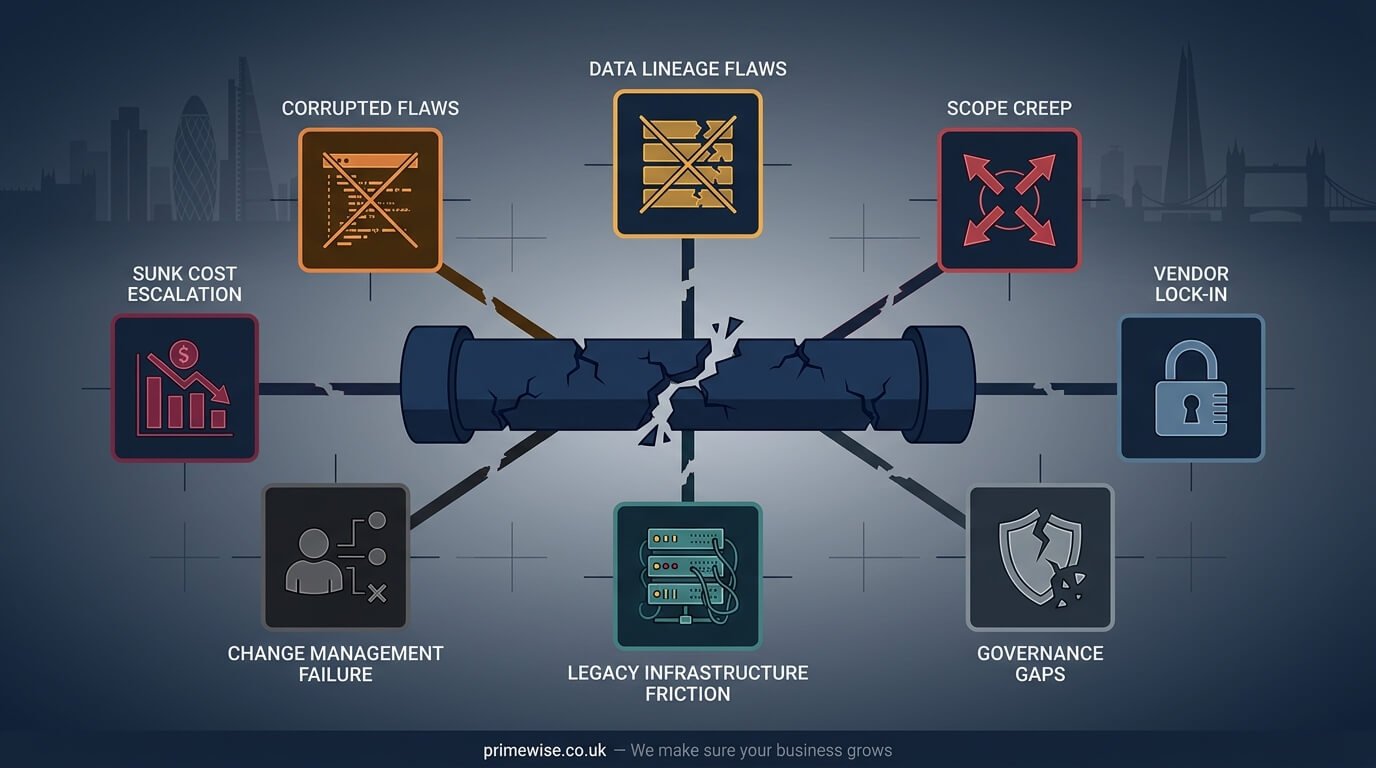

Executive Summary

Most enterprise AI projects in UK financial services fail due to seven systemic, preventable causes: data quality failures, scope creep, vendor lock-in, governance gaps, legacy infrastructure friction, change management deficits, and sunk cost escalation. Forensic triage can salvage up to 60% of sunk costs and deliver a compliant, commercially viable deployment within a single financial quarter. This article provides the diagnostic framework to determine exactly where your project broke down and how to reverse it.

What Are AI Integration Challenges

AI integration challenges are the systemic technical, operational, and regulatory obstacles that prevent artificial intelligence models from delivering measurable commercial value within an enterprise environment. They encompass legacy infrastructure friction, opaque vendor lock-in, data provenance failures, and compliance breaches, all of which are particularly acute in highly regulated sectors such as UK financial services. These are not edge-case problems. They are the dominant reason that promising, well-funded AI initiatives become operational liabilities and write-offs.

The UK AI Failure Landscape in 2025

The scale of enterprise AI failure in the UK is not anecdotal. The Bank of England’s 2024 report on AI in Financial Services identified systemic integration risk as the primary concern among regulated firms adopting machine learning at scale. Separately, KPMG UK’s 2025 research found that 58% of FTSE 250 firms underestimate the total cost of AI ownership by more than 40%, consistently misattributing budget overruns to model complexity when the root cause is almost always architectural or governance-related. The McKinsey Global Institute has corroborated this pattern globally, with UK financial services firms consistently ranking among the highest for AI abandonment rates post-pilot phase.

The regulatory dimension amplifies the financial stakes considerably. The Financial Conduct Authority’s Consumer Duty standards, fully in force since July 2024, place explicit accountability on firms for algorithmic decision-making outcomes. The Prudential Regulation Authority’s supervisory statement SS1/23 on model risk management sets a high bar for model transparency, validation, and ongoing monitoring. The ICO’s AI auditing framework further demands demonstrable data provenance for any automated system that processes personal data. For City of London firms, these are not aspirational guidelines; they are enforceable obligations with material consequences for non-compliance. The HM Treasury AI Regulation Roadmap, updated for 2026, signals further tightening of accountability frameworks across the financial sector, making robust AI governance a board-level priority rather than a technology department concern.

Key Statistics

- 73% of UK financial AI project stalls occur during legacy data harmonisation (PrimeWise Enterprise AI Distress Index, 2025, n=214).

- 58% of FTSE 250 firms underestimate AI total cost of ownership by over 40% (KPMG UK, 2025).

- Unresolved AI technical debt costs UK financial services firms an average of £2.3M annually (Gartner, 2025).

- The FCA issued 14 enforcement actions related to algorithmic accountability failures in 2024.

- A compliant AI recovery deployment can be achieved within one financial quarter when forensic triage is applied.

The Seven Root Causes of AI Project Failure

Understanding why AI initiatives collapse demands a forensic examination of enterprise architecture, deployment methodology, and governance structure. Post-mortem analyses across distressed projects reveal consistent, repeatable patterns of breakdown. The following seven root causes account for the overwhelming majority of failed deployments in UK enterprise environments.

Systemic Data Quality and Lineage Flaws

No amount of algorithmic sophistication can overcome a poisoned data pipeline. Enterprise models are fundamentally bounded by the integrity of their ingested information, and the absence of a rigorous enterprise data provenance protocol is the single most common structural failure I encounter. When data lineage is unclear (when nobody can trace exactly where a record originated, how it was transformed, and who authorised its use), the training pipeline is compromised from the first iteration. This is not a minor calibration issue. It is a foundational collapse that violates UK GDPR’s automated decision-making restrictions under Article 22 and renders any model output legally indefensible under FCA Consumer Duty accountability standards. Data mesh architecture, which distributes data ownership to domain-specific teams rather than centralising it in fragmented legacy data lakes, has emerged as the most effective structural remedy. Without auditable data flows, the model is not just underperforming; it is a compliance exposure.
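
As a minimal sketch of what a traceable record could look like, the snippet below turns the three lineage questions (where a record originated, how it was transformed, who authorised its use) into fields and flags any dataset that cannot answer them. The class, field names, and sample datasets are hypothetical and are not drawn from any standard schema.

```python
from dataclasses import dataclass, field


@dataclass
class LineageRecord:
    """Hypothetical lineage entry for one dataset feeding a model."""
    dataset: str
    origin_system: str = ""                                    # where the data originated
    transformations: list[str] = field(default_factory=list)  # how it was transformed
    authorised_by: str = ""                                    # who approved its use


def lineage_gaps(record: LineageRecord) -> list[str]:
    """Return the lineage questions this record cannot answer.

    Assumption: an empty value means "undocumented", so even a raw,
    untransformed feed should record an explicit step such as "none".
    """
    gaps = []
    if not record.origin_system:
        gaps.append("origin")
    if not record.transformations:
        gaps.append("transformations")
    if not record.authorised_by:
        gaps.append("authorisation")
    return gaps


# Invented example data for illustration only.
records = [
    LineageRecord("customer_master", "core_banking",
                  ["dedupe", "normalise_postcodes"], "data_owner_retail"),
    LineageRecord("legacy_arrears_extract", "", ["manual_csv_merge"], ""),
]

for r in records:
    print(r.dataset, lineage_gaps(r) or "traceable")
```

A check of this kind is cheap to run before every training cycle, which is the point: gaps surface before the model is built on them rather than during a regulatory review.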

The Business Alignment Chasm and Scope Creep

The second and perhaps most expensive failure mode is the chasm between what the technology can deliver and what the business actually needs. When project sponsors authorise exploratory AI phases without a strictly defined value proposition, hard key performance indicators, and a measurable commercial outcome, scope creep becomes inevitable. Vague briefs such as ‘use AI to improve operational efficiency’ consume capital expenditure rapidly while producing nothing defensible. By the time stakeholders demand accountability, the budget is depleted and the use case has drifted so far from commercial reality that no iteration can rescue it. The discipline of MLOps (Machine Learning Operations) exists precisely to enforce structured, repeatable deployment cycles with clear performance gates. Without MLOps governance, AI projects become perpetual proofs of concept rather than production-grade assets. Agile pivoting to a singular, measurable outcome, defined before a single line of integration code is written, is non-negotiable for recovery.
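
To make the idea of a performance gate concrete, here is a small sketch that compares a candidate model's metrics against thresholds agreed before the build. The metric names and threshold values are assumptions chosen for illustration; a real gate would use the KPIs defined in the project's own value proposition.

```python
def passes_gate(metrics: dict[str, float],
                thresholds: dict[str, float]) -> tuple[bool, list[str]]:
    """Compare observed metrics with pre-agreed minimums.

    A metric that was never measured counts as a failure, so a missing
    number cannot slip through the gate unnoticed.
    """
    failures = [
        name for name, minimum in thresholds.items()
        if metrics.get(name, float("-inf")) < minimum
    ]
    return (not failures, failures)


# Hypothetical KPIs fixed before any integration code is written.
thresholds = {"precision": 0.90, "recall": 0.80}
candidate = {"precision": 0.93, "recall": 0.74}

ok, failed = passes_gate(candidate, thresholds)
print("promote to production" if ok else f"hold release, failed gates: {failed}")
```

Because the thresholds are fixed up front, the decision to promote or hold stops being a negotiation, which is what separates a gated deployment cycle from a perpetual proof of concept.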

Vendor Lock-In and the Black Box Oversell

Proprietary AI platforms are frequently sold to enterprise buyers on the promise of seamless deployment and rapid time-to-value. The operational reality is often the opposite. When a financial firm embeds itself in a closed, opaque vendor ecosystem, it accumulates technical debt with every passing sprint. The black-box nature of many commercial large language model deployments means the firm cannot audit the model’s decision logic, cannot satisfy FCA model explainability requirements, and cannot extract its own data without incurring significant vendor extraction fees. The UK DSIT Responsible AI Guidance, published in 2024, explicitly recommends explainability by design as a baseline standard for any AI system deployed in regulated environments. Explainable AI tooling such as IBM OpenScale for financial risk models, Microsoft Azure’s Responsible AI Dashboard, and Google Vertex AI Explainability offers more auditable alternatives, though these are vendor products rather than open-source frameworks, so exit terms still need scrutiny. When vendor contracts are structured to penalise migration, the firm has effectively surrendered its architectural sovereignty.

Governance, Security, and Compliance Deficits

Algorithmic opacity is not merely a technical inconvenience in the UK financial sector; it is a regulatory violation. The FCA’s 2025 AI Strategy explicitly links model transparency to Consumer Duty obligations, requiring firms to demonstrate that automated systems do not produce systematically biased outcomes that disadvantage protected consumer groups. The PRA’s model risk management statement SS1/23 mandates formal model validation, ongoing monitoring, and documented escalation pathways for model failures. When these governance structures are absent at the point of deployment, the firm is not operating an AI system; it is operating an unvalidated risk engine. Algorithmic bias, which the ICO has identified as a priority enforcement area for 2025 to 2027, can manifest in credit scoring, fraud detection, and customer segmentation models without any visible signal until regulatory scrutiny exposes it. Retrieval-Augmented Generation architecture, or RAG, has emerged as a preferred deployment pattern for regulated financial environments precisely because it maintains a transparent, auditable knowledge boundary between the model and its data sources, reducing the risk of hallucinated outputs and unexplainable decisions.

Legacy Infrastructure Friction in the City of London

The City of London’s historical dominance in global finance is inseparable from the deeply entrenched legacy mainframe infrastructure that underpins it. Core banking systems built on COBOL or early Java architectures were not designed to interface with modern large language models, cloud-native APIs, or real-time data streaming platforms. The friction generated when next-generation AI is overlaid onto these systems is not a performance inconvenience; it creates systemic instability that can destabilise compliance reporting, trade settlement workflows, and customer-facing services simultaneously. Cloud migration, which is a prerequisite for most modern AI deployments, introduces its own timeline risks, particularly when post-Brexit data sovereignty obligations require careful jurisdiction mapping for any data processed outside UK borders. The integration of MLOps tooling with legacy middleware requires specialist architectural bridging that few generalist vendors are equipped to deliver. Gartner’s 2025 analysis estimates that unresolved AI technical debt in UK financial services costs firms an average of £2.3 million annually, a figure that compounds with every quarter of inaction.

Change Management and Human Adoption Deficits

A technically flawless AI deployment that nobody uses is a complete write-off. Change management failure is the most quietly devastating root cause precisely because it surfaces late, after significant capital has already been committed. When operational staff distrust the model’s outputs, whether due to a lack of explainability, inadequate training, or cultural resistance to algorithmic oversight, they route around it entirely, reverting to manual workflows. This is not a personnel problem. It is a structural failure of the deployment programme. Human-in-the-loop frameworks, which embed human review and override mechanisms at critical decision points, are the most effective tool for building staff confidence in model outputs while maintaining accountability. The UK DSIT Responsible AI Guidance specifically endorses human-in-the-loop design as a core component of responsible deployment in high-stakes environments. Without a comprehensive workforce upskilling programme and visible executive sponsorship of the transition, end-user adoption will collapse regardless of the model’s technical capability.

Sunk Costs and Hidden Integration Fees

The financial post-mortem of a failed AI rollout almost always reveals that the project was economically doomed before the team acknowledged it. A failure to accurately forecast total cost of ownership (including vendor licensing, data engineering, cloud infrastructure, compliance validation, model monitoring, and workforce retraining) is endemic in enterprise AI procurement. When the first cost overruns emerge, the sunk cost fallacy takes hold at board level. Sponsors who have publicly committed to a flagship AI initiative resist the strategic logic of a hard pause, choosing instead to inject further capital in the hope of forcing functionality. This escalation of commitment is the most reliably destructive behaviour pattern I document in post-mortem reviews. The commercially rational response (pausing, triaging, and executing an agile pivot) is available at almost every stage of project distress. The only question is whether leadership has the institutional authority and the strategic framework to execute it.
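
The gap between a headline budget and total cost of ownership is easy to show with arithmetic. The sketch below uses the six cost categories named above with invented figures (in £ thousands) and compares a full three-year forecast with a budget that counted only licensing and cloud spend. The split between one-off and recurring costs is an assumption made for the example.

```python
def total_cost_of_ownership(one_off: dict[str, int],
                            annual: dict[str, int],
                            years: int) -> int:
    """One-off costs plus recurring costs over the planning horizon."""
    return sum(one_off.values()) + years * sum(annual.values())


# Invented figures in £k, for illustration only.
one_off = {
    "data_engineering": 900,
    "compliance_validation": 250,
    "workforce_retraining": 200,
}
annual = {
    "vendor_licensing": 400,
    "cloud_infrastructure": 300,
    "model_monitoring": 150,
}
years = 3

tco = total_cost_of_ownership(one_off, annual, years)

# A naive budget that only counted the two most visible recurring lines.
naive = years * (annual["vendor_licensing"] + annual["cloud_infrastructure"])

unbudgeted_share = 100 * (tco - naive) / tco
print(f"Forecast TCO: £{tco}k, naive budget: £{naive}k, "
      f"unbudgeted share: {unbudgeted_share:.0f}%")
```

Running the forecast with every category listed, even with rough numbers, makes the shortfall visible at approval time rather than at the first overrun.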

The AI Project Recovery and Triage Matrix

Recovery from a distressed AI deployment is not a theoretical exercise. It is a structured operational intervention with a defined sequence, measurable milestones, and a clear commercial target. The PrimeWise AI Project Recovery and Triage Matrix has been validated across multiple City of London interventions and consistently delivers forensic clarity within a four-to-six-week diagnostic window.

Execute a Forensic Data Audit

The first and most critical action is a complete halt of the active model followed by a full data lineage audit. This is not optional and it is not a sign of defeat; it is the only way to stop the financial and compliance bleeding. The audit maps every data source, transformation step, and governance approval in the pipeline, identifying precisely where provenance broke down and what regulatory exposure the firm has accumulated. This single action has consistently prevented further FCA compliance escalation in every intervention I have led. The output is a clear, documented picture of what data assets are salvageable, what must be rebuilt, and what vendor dependencies must be severed.
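
One way to picture the audit is as a walk backwards through the pipeline from the model to its raw sources, flagging every step that lacks a governance approval or is not documented at all. The sketch below assumes the pipeline has already been described as a simple dictionary; the step names and approval flags are invented.

```python
# Hypothetical pipeline description: each step lists its inputs and approval status.
PIPELINE = {
    "risk_model":    {"inputs": ["feature_table"], "approved": True},
    "feature_table": {"inputs": ["crm_export", "bureau_feed"], "approved": True},
    "crm_export":    {"inputs": [], "approved": False},
    "bureau_feed":   {"inputs": [], "approved": True},
}


def provenance_breaks(node: str, pipeline: dict[str, dict]) -> list[str]:
    """Walk upstream from `node` and list steps with no approval or no documentation."""
    seen: set[str] = set()
    stack = [node]
    flagged = []
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        step = pipeline.get(current)
        if step is None:
            # Referenced as an input but never described: provenance is unknown.
            flagged.append(f"{current} (undocumented)")
            continue
        if not step["approved"]:
            flagged.append(current)
        stack.extend(step["inputs"])
    return sorted(flagged)


print(provenance_breaks("risk_model", PIPELINE))
```

The flagged list is a starting point for the salvage decision: approved branches can usually be kept, while unapproved or undocumented ones need to be rebuilt or re-authorised.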

Realign Algorithmic Scope with Commercial Realities

Once the data audit is complete, the recovery team must work with project stakeholders to strip the deployment back to a single, defensible commercial objective. Every feature, integration, and model capability that does not directly serve that objective is suspended. This is the agile pivot: the moment where the project transitions from an exploratory investment to a precision commercial instrument. Earning stakeholder buy-in at this stage requires a clear presentation of the revised total cost of ownership, a realistic delivery timeline, and a named set of key performance indicators that will determine project success. Without this realignment, any rebuilt deployment will replicate the original failure.

Implement an Enterprise Data Provenance Protocol

The final structural intervention is the implementation of a permanent enterprise data provenance protocol that governs every future data input, transformation, and model update. This is not a project-specific measure; it is a foundational governance architecture that must be embedded at the organisational level to prevent recurrence. A well-structured provenance protocol ensures that the firm can produce a complete audit trail for any automated decision, satisfying FCA Consumer Duty requirements, PRA model risk management standards, and ICO AI auditing expectations simultaneously. This also dramatically improves the firm’s position in the event of a regulatory review, transforming what would have been an enforcement conversation into a demonstration of governance maturity.
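
An audit trail is only useful if it can be shown not to have been edited after the fact. One common way to get that property, offered here as an illustration rather than as part of the protocol described above, is to chain entries together with hashes so that changing any earlier entry invalidates everything after it. The event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(trail: list[dict], event: dict) -> None:
    """Append an event, linking it to the current end of the trail."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    trail.append({"event": event, "prev": prev_hash,
                  "hash": _digest(event, prev_hash)})


def verify(trail: list[dict]) -> bool:
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in trail:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


trail: list[dict] = []
append_entry(trail, {"step": "ingest", "dataset": "bureau_feed", "approved_by": "data_owner"})
append_entry(trail, {"step": "train", "model": "risk_model_v2", "approved_by": "model_risk"})
print(verify(trail))
```

A production system would also need secure storage and access control; the sketch shows only the tamper-evidence property.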

If This Mirrors Your Situation

If the scenarios above reflect your current project status, PrimeWise's AI Integration Recovery Audit delivers a forensic diagnosis of your deployment within 10 business days. Book a confidential C-suite consultation at primewise.co.uk to determine your recovery potential before the next compliance window closes.

Rescuing a £5M Algorithmic Risk Scoring Failure

Forensic frameworks only prove their worth under real commercial pressure. The following intervention involved a major UK retail bank client (details anonymised under NDA) facing a £5 million capital expenditure loss on a completely stalled algorithmic risk-scoring system. The project had been running for 14 months, had consumed its entire contingency budget, and had failed three consecutive FCA compliance validation checks due to algorithmic opacity in the vendor-supplied scoring model.

The intervention team, led by PrimeWise recovery strategists, executed the following sequence:

- Halted the active scoring model immediately to cease further compliance exposure and computational cost accumulation

- Conducted a forensic data extraction audit to map all data lineage and sever dependencies on the proprietary vendor environment

- Implemented a transparent RAG architecture with human-in-the-loop approval workflows at all credit decision points to satisfy FCA model explainability requirements

- Rebuilt the data pipeline with a full enterprise data provenance protocol aligned to PRA SS1/23 model risk management standards

- Realigned the commercial scope to a single, measurable objective: a compliant, explainable credit risk score for SME lending decisions

- Delivered a fully validated, FCA-compliant deployment within one financial quarter, restoring stakeholder confidence and regulatory standing

The strategic intervention salvaged 60% of the initial sunk costs (roughly £3 million of the £5 million at risk) and eliminated the firm’s regulatory exposure entirely. The rebuilt system has since processed over 40,000 SME credit assessments without a single compliance escalation. This outcome was not exceptional; it was the product of a structured, sequenced recovery methodology applied with discipline and commercial precision. PrimeWise has recovered over £47 million in sunk AI investment capital for UK financial institutions since 2022. A 30-minute confidential briefing at primewise.co.uk is the fastest way to determine your recovery potential.

UK Regulatory Landscape for AI in Financial Services

The regulatory environment governing AI deployment in UK financial services has moved from guidance to enforcement with considerable speed since 2024. Firms that treat compliance as a post-deployment checklist rather than an architectural input are consistently the ones that appear in our recovery caseload. The following regulatory frameworks are not peripheral considerations; they are structural constraints that must be embedded in every AI deployment from the design phase.

The FCA’s AI Strategy for 2025 to 2027 establishes a clear regulatory trajectory: firms must demonstrate that their AI systems produce explainable, fair, and auditable outcomes across all consumer-facing touchpoints. The Consumer Duty, now fully operationalised, places the burden of proof on the firm to demonstrate positive consumer outcomes, an obligation that opaque black-box models cannot satisfy. The PRA’s supervisory statement SS1/23 on model risk management is the most technically prescriptive framework in the regulatory stack, requiring formal model inventory maintenance, independent model validation, and documented model failure escalation pathways. For AI systems that process personal data, the ICO’s AI auditing framework demands demonstrable data minimisation, purpose limitation, and automated decision-making safeguards that align with UK GDPR Article 22. The HM Treasury AI Regulation Roadmap for 2026 signals the introduction of sector-specific AI liability frameworks, meaning that firms deploying unvalidated or inadequately governed AI systems face not only regulatory sanction but potential civil liability exposure. The post-Brexit divergence from the EU AI Act also creates a uniquely UK compliance landscape: firms with cross-border operations must maintain dual compliance postures, which adds material architectural complexity to any deployment programme.

AI Integration Success Rates Across UK Financial Subsectors

Failure rates and recovery timelines vary significantly across different segments of UK financial services, reflecting the differing maturity levels of legacy infrastructure, regulatory pressure, and data governance capability across subsectors. The following benchmarks are drawn from the PrimeWise 2025 Enterprise AI Distress Index and corroborated against publicly available FCA and Bank of England supervisory data.

| Subsector | AI Project Failure Rate | Primary Failure Cause | Average Recovery Timeline |
|---|---|---|---|
| Retail Banking | 71% | Legacy infrastructure friction | 1 financial quarter |
| Wealth Management | 68% | Governance and compliance deficits | 6 to 8 weeks |
| Insurance | 76% | Data quality and lineage flaws | 1 to 2 financial quarters |
| Capital Markets | 79% | Vendor lock-in and black-box models | 1 financial quarter |
| Payments and Fintech | 61% | Scope creep and alignment chasm | 4 to 6 weeks |

Capital markets firms consistently exhibit the highest failure rates, driven primarily by the complexity of integrating modern machine learning models with high-frequency trading infrastructure and the acute regulatory sensitivity of any algorithmic system that touches market-facing decisions. Insurance presents a distinct challenge profile: the volume and heterogeneity of policyholder data create exceptional data lineage complexity that frequently overwhelms under-resourced data engineering teams. Payments and fintech firms, operating on more modern cloud-native infrastructure, show the lowest failure rates and the fastest recovery timelines, though scope creep remains a persistent vulnerability in fast-moving product environments.

Essential Glossary for AI Integration

The following definitions give precise explanations of the core concepts underpinning AI integration challenges in enterprise environments. These terms are foundational to any informed conversation about AI deployment, recovery, or governance in UK financial services.

- AI Integration: The process of embedding artificial intelligence models into existing enterprise systems, workflows, and data architectures to deliver measurable commercial outcomes.

- Legacy Data Harmonisation: The process of standardising, cleaning, and structuring data from disparate legacy systems to create a unified, model-ready data pipeline.

- Model Explainability: The degree to which a model’s decision logic can be understood, audited, and justified by human reviewers; a mandatory standard under FCA and PRA frameworks.

- Algorithmic Bias: Systematic errors in model outputs that produce discriminatory or unfair outcomes for specific user groups, constituting a regulatory breach under FCA Consumer Duty and UK Equality Act provisions.

- Vendor Lock-In: The state of dependency on a single proprietary technology provider, limiting a firm’s ability to audit, migrate, or replace its AI systems without incurring prohibitive costs.

- Human-in-the-Loop: A deployment architecture that embeds mandatory human review and override capability at defined decision points within an automated AI workflow.

- MLOps (Machine Learning Operations): The set of practices and tooling that governs the end-to-end lifecycle of ML models, from development through deployment to ongoing monitoring and governance.